What Are Model Interpretability Techniques in AI (2026)? SHAP, LIME, Feature Importance

Model interpretability techniques are methods that help humans understand how an artificial intelligence model makes decisions. These techniques reveal which inputs influence predictions, how strongly they influence them, and why the model produces a specific outcome. In simple terms, they turn a “black box” into a system you can examine, trust, and improve.

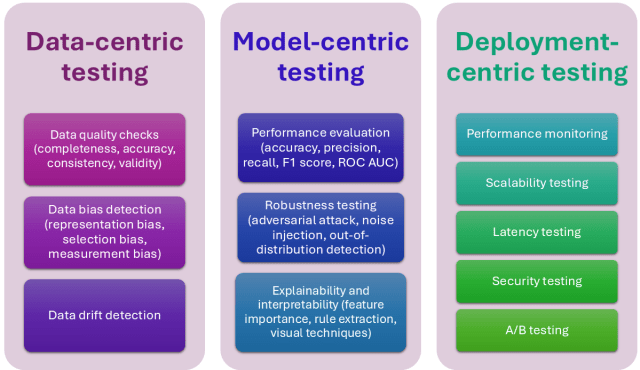

In modern AI model interpretability, data scientists use techniques such as feature importance, SHAP (Shapley Additive Explanations), LIME (Local Interpretable Model-agnostic Explanations), partial dependence plots, surrogate models, counterfactual explanations, and saliency maps. Each technique helps answer a different question about model behavior. For example, feature importance shows which variables matter most, while SHAP explains how each feature contributed to a single prediction.

Understanding what model interpretability means goes beyond curiosity. It enables bias detection, model debugging, and regulatory compliance. Without these methods, interpreting an AI system becomes guesswork, and organizations risk deploying systems they cannot justify or trust.

Model Interpretability vs Explainability: What Most People Get Wrong

Many people use interpretability and explainability as if they mean the same thing, but they solve different problems. Interpretability describes how easily you can understand how a model works internally. Explainability focuses on how well you can explain why the model made a specific decision.

Interpretability answers questions like: “Which features drive this model overall?” Explainability answers questions like: “Why did the model reject this loan application?”

For example, a decision tree offers strong interpretability because you can follow its decision path step by step. A deep neural network lacks interpretability because its internal logic remains complex and hidden. However, you can still achieve model explainability using tools like SHAP or LIME, which explain individual predictions even when the model itself remains complex.

This distinction also shapes the debate around explainable vs interpretable AI. Interpretable models provide transparency by design. Explainable AI, often abbreviated as XAI, adds explanatory tools after training to help humans understand predictions.

In practice, organizations need both. Interpretability helps engineers trust and improve the model. Explainability helps stakeholders, regulators, and users understand and accept its decisions.

Why AI Model Interpretability Is No Longer Optional

AI model interpretability now sits at the center of responsible machine learning. Organizations no longer deploy models blindly. They must understand how systems make decisions before they trust them in real-world environments.

First, interpretability helps teams detect bias early. If a hiring model ranks candidates unfairly, interpretability techniques can reveal whether factors like age, gender, or location influence predictions improperly. This visibility allows teams to correct problems before deployment.

Second, interpretability improves model performance. Engineers can inspect which features drive predictions and identify errors, data leakage, or irrelevant inputs. Without AI interpretability, teams often waste time optimizing models that rely on the wrong signals.

Third, model explainability supports regulatory and business requirements. Financial institutions, healthcare providers, and insurance companies must justify automated decisions. They must show why a model approved a loan, flagged fraud, or predicted a medical risk.

Finally, interpretability builds trust. Stakeholders adopt AI faster when they understand it. Leaders approve deployment when they see clear explanations. Users accept decisions when they know the reasoning behind them.

In 2026, teams no longer treat interpretability as optional. They treat it as a core requirement for deploying reliable and accountable AI systems.

7 Model Interpretability Techniques Every Machine Learning Engineer Should Know

Model interpretability techniques in machine learning allow you to inspect, validate, and interpret the model instead of treating it like a black box. Each technique answers a different question, so you must choose the right one based on your goal, model type, and data.

1. Feature Importance

Feature importance ranks input variables based on how much they influence predictions. It helps you identify which features drive model behavior.

For example, in a credit scoring model, feature importance may show that credit score influences decisions more than income. This insight helps engineers interpret the model and verify whether it relies on logical factors.

Tree-based models such as Random Forest and XGBoost provide built-in feature importance scores. Permutation feature importance works as a model agnostic alternative and applies to any model.

This technique provides a global view of model behavior.
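As a rough illustration of the permutation approach, the sketch below shuffles one feature at a time and measures how much the prediction error grows. The `model` function, the feature names, and the synthetic data are all hypothetical stand-ins for a trained model and a real dataset, not part of any library.

```python
import random

def model(row):
    # Hypothetical scoring rule standing in for a trained model:
    # credit_score dominates, income matters a little, noise is ignored.
    credit_score, income, noise = row
    return 0.8 * credit_score + 0.2 * income

def mean_squared_error(rows, targets):
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)

def permutation_importance(rows, targets, n_features, rng):
    # Importance of feature j = increase in error after shuffling column j.
    baseline = mean_squared_error(rows, targets)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)
        shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(mean_squared_error(shuffled, targets) - baseline)
    return importances

rng = random.Random(0)
rows = [(rng.random(), rng.random(), rng.random()) for _ in range(500)]
targets = [model(r) for r in rows]  # synthetic labels for the demo

importances = permutation_importance(rows, targets, 3, rng)
for name, score in zip(["credit_score", "income", "noise"], importances):
    print(f"{name}: {score:.4f}")
```

In this toy setup the ignored feature should score zero, which is the kind of sanity check permutation importance makes easy.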

2. SHAP (Shapley Additive Explanations)

SHAP stands among the most powerful explanatory tools in AI interpretability. It assigns each feature a contribution value for a specific prediction.

SHAP answers questions like:

Why did the model predict fraud for this transaction?

It shows whether each feature pushed the prediction higher or lower.

Unlike simple feature importance, SHAP explains individual predictions and, when its values are aggregated across many predictions, global behavior as well. This makes it one of the most widely used model interpretability techniques in AI.
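To make the idea of a per-feature contribution concrete, here is a minimal sketch that computes exact Shapley values for a three-feature toy model by averaging each feature's marginal effect over every ordering. It does not use the SHAP library. The `model` function, the feature names, and the all-zero baseline are assumptions chosen for illustration, and real tools typically average over a background dataset instead of a single baseline.

```python
from itertools import permutations

def model(features):
    # Hypothetical fraud score with an interaction between amount and foreign.
    score = 0.1 + 0.3 * features["amount"] + 0.2 * features["night"]
    score += 0.4 * features["amount"] * features["foreign"]
    return score

def shapley_values(model, instance, baseline):
    # Average each feature's marginal contribution over all feature orderings.
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)
        previous = model(current)
        for name in order:
            current[name] = instance[name]  # switch this feature to its real value
            value = model(current)
            totals[name] += value - previous
            previous = value
    return {name: total / len(orderings) for name, total in totals.items()}

instance = {"amount": 1.0, "night": 1.0, "foreign": 1.0}
baseline = {"amount": 0.0, "night": 0.0, "foreign": 0.0}

contributions = shapley_values(model, instance, baseline)
print(contributions)
```

The contributions add up to the difference between the prediction for the instance and the prediction for the baseline, which is the additive property the method is named for. Enumerating every ordering only works for a handful of features, which is why practical implementations rely on approximations or model-specific shortcuts.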

3. LIME (Local Interpretable Model-agnostic Explanations)

LIME explains individual predictions by building a simple model that approximates the complex model locally.

This model agnostic technique works with any machine learning model, including neural networks.

For example, if an image classifier predicts cancer from a scan, LIME highlights which regions influenced the decision.

LIME helps teams interpret AI systems when internal model logic remains complex or inaccessible.
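The local-approximation idea can be sketched without the LIME library: sample points near the instance, weight them by proximity, and fit a weighted linear model whose slopes act as the explanation. Everything here (`black_box`, the Gaussian sampling, the kernel width) is an illustrative assumption. The actual LIME package adds steps such as interpretable feature representations and feature selection that this sketch omits.

```python
import math
import random

def black_box(x1, x2):
    # Hypothetical nonlinear model we want to explain locally.
    return math.sin(x1) + x2 ** 2

def solve(matrix, vector):
    # Gaussian elimination with partial pivoting for a small linear system.
    n = len(vector)
    a = [row[:] + [v] for row, v in zip(matrix, vector)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n + 1):
                a[r][c] -= factor * a[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (a[i][n] - sum(a[i][j] * x[j] for j in range(i + 1, n))) / a[i][i]
    return x

def local_linear_explanation(point, n_samples=2000, scale=0.1, seed=0):
    # Fit y ~ b0 + w1*d1 + w2*d2 on perturbations (d1, d2) around the point,
    # weighting nearby samples more heavily (weighted least squares).
    rng = random.Random(seed)
    xtwx = [[0.0] * 3 for _ in range(3)]
    xtwy = [0.0] * 3
    for _ in range(n_samples):
        d1, d2 = rng.gauss(0, scale), rng.gauss(0, scale)
        weight = math.exp(-(d1 ** 2 + d2 ** 2) / (2 * scale ** 2))
        design = [1.0, d1, d2]
        y = black_box(point[0] + d1, point[1] + d2)
        for i in range(3):
            xtwy[i] += weight * design[i] * y
            for j in range(3):
                xtwx[i][j] += weight * design[i] * design[j]
    _, w1, w2 = solve(xtwx, xtwy)
    return w1, w2

w1, w2 = local_linear_explanation((0.0, 1.0))
print(f"local effect of x1: {w1:.2f}, local effect of x2: {w2:.2f}")
```

Near the chosen point the slopes should land close to the true local gradient of the toy function, which illustrates why the explanation is only valid in that neighborhood.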

4. Partial Dependence Plots (PDP)

Partial dependence plots show how a single feature affects predictions on average, by varying that feature while averaging over the observed values of the other features.

This technique helps engineers interpret AI behavior globally.

For example, a PDP can reveal how income affects loan approval probability. The plot may show approval rates increase sharply after a certain income level.

This technique helps teams understand feature-prediction relationships visually.
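The computation behind a partial dependence curve is short enough to sketch directly: for each grid value, overwrite the feature in every row, predict, and average. The `model` function, its income threshold, and the five sample rows below are hypothetical and exist only to show the mechanics.

```python
def model(age, income):
    # Hypothetical approval probability: jumps once income reaches 50 (thousand).
    base = 0.2 + 0.002 * (age - 18)
    return min(1.0, base + (0.5 if income >= 50 else 0.0))

def partial_dependence(rows, feature_index, grid):
    # For each grid value, set the feature to that value in every row
    # and average the model's predictions over the dataset.
    curve = []
    for value in grid:
        total = 0.0
        for row in rows:
            modified = list(row)
            modified[feature_index] = value
            total += model(*modified)
        curve.append(total / len(rows))
    return curve

rows = [(25, 30), (40, 45), (55, 60), (33, 80), (62, 20)]  # (age, income)
income_grid = [20, 40, 50, 60, 80]

curve = partial_dependence(rows, 1, income_grid)
for income, avg in zip(income_grid, curve):
    print(f"income={income}: average approval probability {avg:.2f}")
```

Plotting `curve` against `income_grid` would give the partial dependence plot. Because the curve is an average, it can hide interactions where the feature affects different groups in opposite ways.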

5. Surrogate Models

Surrogate models improve model explainability by approximating complex models with simpler ones.

Engineers train an interpretable model, such as a decision tree, to mimic the original model’s predictions.

They then analyze the surrogate model to understand how the original model behaves.

This approach works especially well for complex black-box systems.
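A minimal sketch of the surrogate workflow, assuming a hypothetical `black_box` function in place of a real model: label data with the black box's own predictions, fit the simplest possible tree (a single threshold rule), and report how often the surrogate agrees with the original. That agreement rate is often called fidelity.

```python
import random

def black_box(income, debt):
    # Hypothetical opaque model: approve (1) when a nonlinear score is positive.
    return 1 if 0.05 * (income - 40) - 0.001 * debt ** 2 > 0 else 0

def fit_stump(rows, labels):
    # Try every single-threshold rule and keep the one that agrees most
    # often with the black box's predictions.
    best = None
    for feature in range(len(rows[0])):
        for threshold in sorted({r[feature] for r in rows}):
            for above in (0, 1):
                preds = [above if r[feature] > threshold else 1 - above for r in rows]
                fidelity = sum(p == y for p, y in zip(preds, labels)) / len(rows)
                if best is None or fidelity > best["fidelity"]:
                    best = {"feature": feature, "threshold": threshold,
                            "label_above": above, "fidelity": fidelity}
    return best

rng = random.Random(1)
rows = [(rng.uniform(0, 100), rng.uniform(0, 30)) for _ in range(200)]
labels = [black_box(*r) for r in rows]  # the surrogate learns the model's outputs

surrogate = fit_stump(rows, labels)
print(surrogate)
```

A surrogate is only as trustworthy as its fidelity. If the simple model disagrees with the original on a large share of cases, conclusions drawn from it may not carry over.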

6. Counterfactual Explanations

Counterfactual explanations show what must change to produce a different prediction.

They answer questions like:

What must change for the loan to get approved?

For example, a counterfactual explanation may reveal that increasing income by $5,000 would change the decision.

This technique provides actionable artificial intelligence interpretation instead of abstract analysis.
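The search behind a counterfactual can be sketched as a loop that nudges one input until the decision flips. The `approve` rule, the applicant figures, and the step size are hypothetical. Practical methods also search across several features and penalize unrealistic changes.

```python
def approve(income, debt):
    # Hypothetical approval rule standing in for a trained classifier.
    return income - 0.5 * debt >= 50_000

def income_counterfactual(income, debt, step=1_000, max_increase=50_000):
    # Find the smallest income increase (in multiples of step) that flips
    # a rejection into an approval; return None if none is found.
    for increase in range(0, max_increase + step, step):
        if approve(income + increase, debt):
            return increase
    return None

needed = income_counterfactual(income=47_000, debt=4_000)
print(f"Income increase needed for approval: ${needed:,}")
# prints "Income increase needed for approval: $5,000"
```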

7. Saliency Maps (Deep Learning Interpretability)

Saliency maps rank among the most important model interpretability techniques in deep learning. They highlight which parts of input data influence predictions.

In image models, saliency maps show which pixels affected classification.

In text models, they highlight influential words.

This helps engineers understand neural network behavior visually.
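A common form of saliency is the magnitude of the output's gradient with respect to each input. The sketch below estimates that gradient by finite differences on a tiny one-layer network with made-up weights. Deep learning frameworks obtain the same quantity through automatic differentiation, so treat this only as an illustration of what the map measures.

```python
import math

WEIGHTS = [2.0, -0.5, 0.0, 1.0]  # hypothetical weights of a tiny one-layer network

def network(pixels):
    # Sigmoid of a weighted sum: a stand-in for a real image classifier.
    z = sum(w * p for w, p in zip(WEIGHTS, pixels))
    return 1 / (1 + math.exp(-z))

def saliency(pixels, eps=1e-5):
    # Approximate |d output / d pixel| with central finite differences.
    scores = []
    for i in range(len(pixels)):
        up = list(pixels)
        up[i] += eps
        down = list(pixels)
        down[i] -= eps
        scores.append(abs(network(up) - network(down)) / (2 * eps))
    return scores

pixels = [0.2, 0.9, 0.5, 0.1]
scores = saliency(pixels)
for i, score in enumerate(scores):
    print(f"pixel {i}: saliency {score:.4f}")
```

The pixel with the largest weight receives the highest saliency, and the pixel with zero weight receives none, regardless of how bright it is.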

Together, these model interpretability techniques form the foundation of modern AI model interpretability. They allow teams to interpret the model confidently, validate its logic, and deploy it responsibly.

Model Agnostic vs Model Specific Interpretability Techniques

You must understand the difference between model agnostic vs model specific techniques before you choose how to interpret the model. This distinction determines whether a technique works with any model or only with certain algorithms.

Model agnostic interpretability techniques work with any machine learning model. They treat the model as a black box and analyze its inputs and outputs instead of its internal structure. Popular model agnostic methods include LIME, permutation feature importance, counterfactual explanations, and SHAP in its kernel-based form (SHAP also offers faster model specific variants for tree ensembles and deep networks). These techniques help teams achieve model explainability even when the model uses complex architectures like neural networks or ensemble systems.

For example, you can use SHAP to explain predictions from XGBoost, neural networks, or logistic regression without modifying the model itself.

Model specific techniques only work with certain model types. They rely on internal model structure to provide explanations. Linear regression uses coefficients to show feature impact. Decision trees allow you to trace the exact path that led to a prediction. Neural networks use attention weights and saliency maps to highlight important inputs.

Model specific techniques often provide faster and more direct explanations, but they lack flexibility. Model agnostic techniques provide broader coverage and stronger consistency across systems.

In practice, teams combine both approaches. They use model specific methods when possible and apply model agnostic techniques when working with complex or production-level AI systems.

Best Explainable AI Tools and XAI Tools in Python

Modern explainable AI tools make it easier to apply model interpretability techniques in Python without building everything from scratch. These libraries help engineers interpret AI systems, visualize feature impact, and deliver reliable model explainability in production.

1. SHAP

SHAP ranks among the most widely used AI explainability tools for interpretable machine learning with Python. It calculates feature contribution values for individual predictions and global model behavior, exactly for some model types such as tree ensembles and by approximation for others.

Engineers use SHAP to:

- Explain credit decisions

- Debug model errors

- Identify biased features

SHAP supports tree models, neural networks, and linear models, making it one of the most versatile explanatory tools available.

2. LIME

LIME provides a simple way to interpret AI predictions locally. It builds a lightweight interpretable model around a single prediction and reveals which features influenced the result.

LIME works well for:

- Tabular models

- Text classifiers

- Image models

Because LIME uses a model agnostic approach, it integrates easily with almost any machine learning pipeline.

3. InterpretML

InterpretML stands out among XAI tools because it provides both interpretable models and explanation tools. It allows teams to train transparent models and apply post-hoc explanations to black-box systems.

It helps teams:

- Interpret the model globally

- Explain individual predictions

- Compare model explainability across systems

This makes it ideal for regulated industries.

4. Alibi

Alibi focuses on advanced AI model interpretability and counterfactual explanations. It helps engineers understand what changes would alter a prediction.

Teams use Alibi to:

- Generate counterfactual explanations

- Detect model bias

- Improve decision transparency

This makes it valuable for finance, healthcare, and risk modeling.

5. ELI5

ELI5 helps engineers interpret machine learning models by showing feature weights, importance scores, and prediction explanations.

It supports:

- Scikit-learn models

- Text classifiers

- Tree-based models

ELI5 simplifies artificial intelligence interpretation and helps engineers validate model behavior quickly.

These explainable AI tools allow teams to implement model interpretability techniques in Python workflows efficiently. Instead of guessing how models behave, engineers can interpret AI systems clearly, validate decisions, and deploy trustworthy machine learning solutions.

How to Choose the Right Model Interpretability Technique

You should choose a model interpretability technique based on what you want to learn from the model. Different techniques answer different questions, and using the wrong one can lead to misleading conclusions.

If you want to understand which features influence the model overall, use feature importance or partial dependence plots. These techniques provide a global view of AI model interpretability and help you see the main drivers behind predictions.

If you want to explain why the model made a specific decision, use SHAP or LIME. These techniques show how individual features influenced a single prediction. For example, SHAP can reveal why a fraud detection model flagged a transaction.

If you work with deep learning models, use saliency maps. These techniques help you interpret the model visually by showing which parts of the input affected the output.

If you need actionable explanations, use counterfactual explanations. They show what changes would produce a different result, which helps businesses make practical decisions.

You should also consider whether you need a model agnostic solution. Model agnostic techniques like SHAP and LIME work across different model types and provide flexibility in production systems.

In practice, teams rarely rely on one method. They combine multiple techniques to interpret AI systems completely, validate predictions, and ensure reliable model explainability.

Best Practices for Reliable Model Explainability

You should follow proven best practices when applying model interpretability techniques to avoid false conclusions. Interpretability tools can mislead you if you use them incorrectly, so you must validate every explanation carefully.

Start with simple models when possible.

Linear models and decision trees offer built-in transparency and help you understand feature relationships quickly. Even if you later deploy complex systems, simple models provide a strong baseline for comparison.

Use multiple explainable AI tools instead of relying on one method.

SHAP, LIME, and feature importance each reveal different aspects of model behavior. When multiple methods point to the same conclusion, you gain stronger confidence in the explanation.

Check explanation stability.

Small changes in input data should not completely change feature importance rankings. Unstable explanations often signal weak models, poor data quality, or overfitting.
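One way to check stability, sketched here under simplifying assumptions, is to recompute a feature ranking on many bootstrap resamples and see how often the ranking stays the same. The `model` function, the synthetic data, and the choice of 20 resamples are illustrative, not a recommended standard.

```python
import random

def model(row):
    # Hypothetical model: feature 0 matters most, feature 2 not at all.
    return 3.0 * row[0] + 1.0 * row[1]

def importance_ranking(rows, rng):
    # Rank features by how much shuffling each one changes the predictions.
    scores = []
    for j in range(len(rows[0])):
        column = [r[j] for r in rows]
        rng.shuffle(column)
        change = sum(
            abs(model(r[:j] + (v,) + r[j + 1:]) - model(r))
            for r, v in zip(rows, column)
        )
        scores.append(change / len(rows))
    return sorted(range(len(scores)), key=lambda j: -scores[j])

rng = random.Random(42)
data = [(rng.random(), rng.random(), rng.random()) for _ in range(300)]

rankings = []
for _ in range(20):
    sample = [rng.choice(data) for _ in data]  # bootstrap resample
    rankings.append(tuple(importance_ranking(sample, rng)))

# Share of resamples whose ranking matches the first one.
stable_share = rankings.count(rankings[0]) / len(rankings)
print(f"Share of resamples with identical ranking: {stable_share:.0%}")
```

A share well below 100% on real data would suggest that the ranking depends heavily on which rows happen to be in the sample, which is a reason to treat the explanation with caution.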

Validate explanations against domain knowledge.

Subject matter experts should review explanations to confirm they align with real-world logic. This step strengthens trust and improves artificial intelligence interpretation.

Document your findings clearly.

Teams must record how they interpret the model, which explainable AI tools they used, and what conclusions they reached. This documentation supports audits, regulatory compliance, and long-term model reliability.

When teams follow these practices, they improve AI interpretability, strengthen model explainability, and deploy machine learning systems with confidence.

Final Thoughts…

Model interpretability techniques allow you to understand, trust, and improve modern AI systems. They help you identify which features drive predictions, explain individual decisions, and detect hidden risks before deployment. Without strong AI model interpretability, even the most accurate model can become dangerous, unreliable, or impossible to justify.

Techniques such as SHAP, LIME, feature importance, and saliency maps give you the ability to interpret the model from different angles. Some methods explain global behavior, while others focus on individual predictions. Together, they give a fuller picture of model behavior than any single method and help teams deploy artificial intelligence responsibly.

As AI adoption accelerates, interpretability will separate trustworthy systems from risky ones. Teams that master model interpretability techniques will build better models, gain stakeholder trust, and meet growing regulatory expectations.

In 2026 and beyond, you cannot afford to treat interpretability as optional. You must treat it as a core part of building reliable and accountable AI.

Ready to Strengthen Your AI Model Interpretability and Explainability Strategy?

As AI systems take on more critical decisions, you can no longer afford to deploy models you don’t fully understand. Strong model interpretability techniques help you detect bias, improve model performance, meet regulatory requirements, and build trust with stakeholders.

Whether you want to implement SHAP, LIME, and other explainable AI tools, interpret complex machine learning models, or ensure your AI systems remain transparent and accountable, the right interpretability strategy gives you a powerful advantage.

Tolulope Michael has helped organizations, data teams, and AI leaders implement reliable AI model interpretability frameworks, enabling them to understand their models deeply, reduce risk, and deploy AI with confidence.

Book a One-on-One AI Model Interpretability Consultation with Tolulope Michael

If you need help interpreting your machine learning models, selecting the right explainable AI tools, validating model explanations, or building trustworthy AI systems, a short consultation will give you clear, practical steps to improve your model transparency, strengthen compliance, and elevate your AI deployment strategy.

FAQ

What are the main 3 types of ML models?

The main three types of machine learning models are supervised learning models, unsupervised learning models, and reinforcement learning models.

Supervised learning models learn from labeled data. They make predictions based on known input and output relationships. Examples include linear regression, logistic regression, and Random Forest.

Unsupervised learning models find patterns in unlabeled data. They group data or reduce its complexity without predefined answers. Examples include K-means clustering and principal component analysis.

Reinforcement learning models learn through interaction and feedback. They improve performance by receiving rewards or penalties for their actions. These models are widely used in robotics, gaming, and autonomous systems.

Each type serves different purposes depending on the problem and data available.

What are the 4 methods of interpretation?

The four common methods of interpretation in machine learning are feature importance, local explanation methods, global explanation methods, and visual explanation techniques.

Feature importance ranks features based on their impact on model predictions. It helps identify which variables influence decisions the most.

Local explanation methods, such as SHAP and LIME, explain individual predictions. They show why the model produced a specific result.

Global explanation methods, such as partial dependence plots, explain how the model behaves overall.

Visual explanation techniques, such as saliency maps, highlight which parts of input data influence predictions, especially in deep learning models.

These methods help data scientists understand and validate model behavior.

What is an example of an interpretive approach?

A common example of an interpretive approach is using SHAP to explain a loan approval model.

For example, if a model rejects a loan application, SHAP can show that low income reduced the approval score, while a strong credit history increased it. This explanation helps both engineers and decision-makers understand exactly why the model made that decision.

This approach improves transparency, helps detect bias, and supports better decision-making.

Is ChatGPT a machine learning model?

Yes, ChatGPT is a machine learning model. Specifically, it is a large language model built using deep learning and trained on vast amounts of text data.

ChatGPT uses a neural network architecture called a transformer to understand language patterns and generate human-like responses. It learns from data during training and improves performance through optimization techniques.

Because of its complexity, developers often apply explainable AI tools and interpretability techniques to understand and monitor its behavior.